Credit: Rob Dobi

Generative artificial intelligence systems, including large language models such as ChatGPT and image-generation software such as Stable Diffusion, are powerful new tools for individuals and businesses. They also raise profound and novel questions about how data is used in AI models and how the law applies to the output of those models, such as a paragraph of text or a computer-generated image.

“We’re witnessing the birth of a really great new technology,” said Regina Sam Penti, SB ’02, MEng ’03, a law partner at Ropes & Gray who specializes in technology and intellectual property. “It’s an exciting time, but it’s a bit of a legal minefield out there right now.”

At this year’s EmTech Digital conference, sponsored by MIT Technology Review, Penti discussed what users and businesses should know about the legal issues surrounding generative AI, including several pending U.S. court cases and how companies should think about protecting themselves.

AI lawsuits

Most lawsuits about generative AI center on data use, Penti said, “which is not surprising, given these systems consume huge, huge amounts of data from all corners of the world.”

One lawsuit brought by several coders against GitHub, Microsoft, and OpenAI is centered on GitHub Copilot, which converts commands written in plain English into computer code in dozens of different coding languages. Copilot was trained and developed on billions of lines of open-source code that had already been written, leading to questions of attribution.

“For people who operate in the open-source community, it’s pretty easy to take open-source software and make sure you keep the attribution, which is a requirement for being able to use the software,” Penti said. An AI model like the one underpinning Copilot, however, “doesn’t realize that there are all these requirements to comply with.” The ongoing suit alleges that the companies breached software licensing terms, among other things.

In another instance, several visual artists filed a class-action lawsuit against the companies that created the image generators Stable Diffusion, Midjourney, and DreamUp, all of which generate images based on text prompts from users. The case alleges that the AI tools violate copyrights by scraping images from the internet to train the AI models. In a separate lawsuit, Getty Images alleges that Stable Diffusion’s use of its images to train models infringes on copyrights. All of the images generated by Stable Diffusion are derivative works, the suit alleges, and some of those images even contain a vestige of the Getty watermark.

There are also “way too many cases to count” centered on privacy concerns, Penti said. AI models trained on internal data could even violate companies’ own privacy policies. There are also more niche scenarios, like a case in which a mayor in Australia considered filing a defamation lawsuit against OpenAI after ChatGPT falsely claimed that he had spent time in prison.

While it’s not clear how legal threats will affect the development of generative AI, they could force creators of AI systems to think more carefully about what data sets they train their models on. More likely, legal issues could slow down adoption of the technology as companies assess the risks, Penti said.

Fitting old frameworks to new challenges

In the U.S., the two main legal systems for regulating the type of work generated by AI are copyrights and patents. Neither is easy to apply, Penti said.

Applying copyright law involves determining who actually came up with an idea for, say, a piece of visual art. The U.S. Copyright Office recently said that work can be copyrighted in cases where AI assisted with the creation; works wholly created by AI would not be protectable.

The patent office, which offers a stronger form of protection for intellectual property, remains vague on how patent law will apply to the outputs of AI systems. “Our statutory patent system is really created to protect physical gizmos,” Penti said, which makes it ill-equipped to deal with software. Decade by decade, the office has reconsidered what is and is not patentable. At present, AI is raising critical questions about who “invented” something and whether it can be patented.

“In the U.S., in order to be an inventor, at least based on current rules, you have to be a human, and you have to be the person who conceived the invention,” Penti said. In the case of drug development, for example, a pharmaceutical company could use AI to comb through millions of molecular prospects and winnow them down to 200 candidates and then have scientists refine that pool to the two best possibilities.

“According to the patent office, or according to U.S. invention laws, the inventor is the one that actually conceived those molecules, which is the AI system,” Penti said. “In the U.S., you cannot patent a system that’s created using artificial intelligence. So you have this amazing way to really significantly cut down the amount of time it takes to get from candidate to drug, and yet we don’t have a good system for protecting it.”

Given how immature these regulatory frameworks remain, “contracts are your best friend,” Penti said. Data is often seen as a source of privacy or security risk, she added, so data isn’t protected as an asset. To make up for this, parties can use contracts to agree on who has the right to different intellectual property.

But “in terms of actual framework-based statutory support, the U.S. has a long way to go,” Penti said.

What a murky legal landscape means for companies

The legal issues accompanying generative AI have several implications for companies that develop AI programs and those that use them, Penti said.

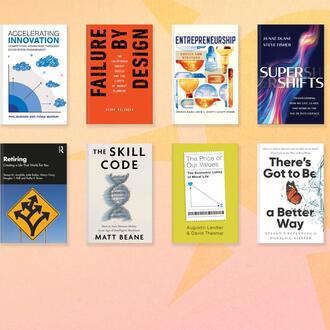

Related Articles

Developers might need to get smarter and more creative about where they get training data for AI models, Penti said, which will also help them avoid delays caused by a lack of clarity around what’s permissible.

Companies beginning to integrate AI into their operations have several options to reduce legal risk. First, companies should be active in their due diligence, taking actions such as monitoring AI systems and getting adequate assurances from service and data providers. Contracts with service and data providers should include indemnification — a mechanism for ensuring that if a company uses a product or technology in accordance with an agreement, that company is protected from legal liability.

“Ultimately, though, I think the best risk-mitigation strategy is proper training of employees who are creating these systems for you,” Penti said. “You should ensure they understand, for instance, that just because something is out there for free doesn’t mean it’s free of rights.”
