3 requirements for successful artificial intelligence programs

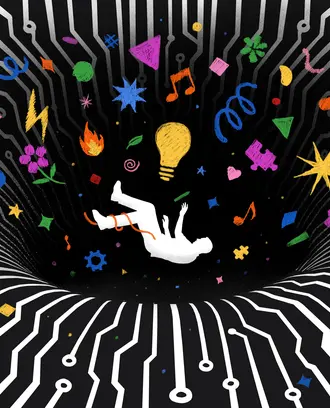

Many AI programs do not generate business gains. For successful deployment, make sure there’s scientific, stakeholder, and application consistency.

Companies around the world are expected to spend $97.9 billion on artificial intelligence by the end of 2023, but many AI initiatives fail or don’t turn a profit. A 2019 study found that 40% of organizations that make significant investments in AI do not report business gains.

Successful AI programs require an approach called AI alignment, according to a new research briefing from the MIT Center for Information Systems Research. Since 2019, CISR has investigated 52 AI solutions, which the researchers define as applied analytics models that have some level of autonomy. Of those, 31 have been deployed at scale.

CISR principal research scientist Barbara Wixom, University of Queensland lecturer Ida Someh, and University of Virginia professor Robert Gregory found that successful AI programs achieve three interdependent states of consistency: scientific consistency, application consistency, and stakeholder consistency.

Scientific consistency between reality and the AI model. AI models have to be trained to represent reality, and successful models have to be accurate. To create scientific consistency, teams engaged in comparing activities: checking the output of AI models against empirical evidence. When they discovered inconsistencies, AI teams corrected course by adjusting the data, features, algorithm, or domain knowledge.
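To make the idea of a comparing activity concrete, here is a minimal Python sketch, not a method described in the research. It assumes a hypothetical record format of (segment, predicted, observed) tuples and a hypothetical accuracy threshold of 0.9; segments where the model disagrees with observed outcomes too often are flagged so a team can investigate the data, features, or algorithm.

```python
from collections import defaultdict


def find_inconsistencies(records, threshold=0.9):
    """Compare model predictions with observed outcomes, grouped by segment.

    records: iterable of (segment, predicted, observed) tuples (hypothetical format).
    Returns {segment: accuracy} for every segment whose accuracy is below threshold.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for segment, predicted, observed in records:
        totals[segment] += 1
        if predicted == observed:
            hits[segment] += 1
    # Keep only the segments where the model's output diverges from reality.
    return {
        seg: hits[seg] / totals[seg]
        for seg in totals
        if hits[seg] / totals[seg] < threshold
    }


# Hypothetical example: the model matches reality in region_a but not region_b.
records = [
    ("region_a", 1, 1),
    ("region_a", 0, 0),
    ("region_b", 1, 0),
    ("region_b", 1, 1),
]
flagged = find_inconsistencies(records)
print(flagged)
```

In this sketch the flagged segment is only a starting point; deciding whether to fix the data, the features, or the algorithm remains a human judgment, as in the GE example that follows.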

For example, General Electric’s environment, health, and safety team created an AI-enabled assessment that vets contractors hired by the company. The team went through the time-consuming process of building a training dataset, and reviewers checked the machine-learning assessments. This led to model adjustments and retraining, which made the model more accurate.

Application consistency between the AI model and the solution. An AI model doesn’t just need to be accurate; it also needs to achieve its goals and avoid unintended consequences. AI deployment requires scoping activities, which assess impacts and consequences and, if needed, adjust the model’s restrictions, boundaries, automation, and oversight.

The Australian Taxation Office, a large government department, launched a new AI program that prompted taxpayers filing online to review an item. The program was intended to reduce noncompliance by taxpayers, but it also had to pass scrutiny from regulators and serve the best interests of taxpayers and the government.

The department made sure the program reflected its principles and designed it so that it did not engage in policing. The behavioral analytics team developed gentle, respectful techniques to encourage productive behaviors when taxpayers were filing a claim, according to the research brief.

The organization also decided not to enable continuous learning for its neural network algorithm during a tax cycle. That way, results would stay consistent no matter when a tax claim was filed, and they could be replicated.
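The design choice of freezing a model for the length of a tax cycle can be illustrated with a small Python sketch. This is a hypothetical registry, not the Australian Taxation Office's system: the `ModelVersion` and `CycleModelRegistry` names, the version strings, and the dot-product scorer standing in for a neural network are all assumptions for illustration. Retrained models are staged and only take effect when a new cycle starts, so every claim within a cycle is scored by the same model.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class ModelVersion:
    version: str
    weights: Tuple[float, ...]  # hypothetical stand-in for trained parameters


@dataclass
class CycleModelRegistry:
    """Pins one model version per tax cycle (hypothetical design)."""

    active: ModelVersion
    staged: Optional[ModelVersion] = None

    def stage(self, candidate: ModelVersion) -> None:
        # A retrained model waits here; it does not affect the current cycle.
        self.staged = candidate

    def start_new_cycle(self) -> None:
        # Promote the staged model only at a cycle boundary.
        if self.staged is not None:
            self.active = self.staged
            self.staged = None

    def score(self, features: Tuple[float, ...]) -> float:
        # Dot product as a hypothetical stand-in for a neural network.
        return sum(w * x for w, x in zip(self.active.weights, features))


registry = CycleModelRegistry(active=ModelVersion("cycle-1", (0.5, 0.2)))
claim = (1.0, 2.0)
early = registry.score(claim)  # claim filed early in the cycle
registry.stage(ModelVersion("cycle-2", (0.7, 0.3)))  # retrained mid-cycle
late = registry.score(claim)  # same claim filed late: same result
registry.start_new_cycle()
next_cycle = registry.score(claim)  # new model applies only now
print(early, late, next_cycle)
```

The trade-off in this sketch is that improvements learned mid-cycle wait until the next cycle, in exchange for results that are consistent and replicable within a cycle.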

Stakeholder consistency between the solution and stakeholder needs. The program should generate benefits across a network of stakeholders such as managers, frontline workers, investors, customers, citizens, and regulators. Consistency is achieved when an AI program creates value that stakeholders understand, support, and benefit from. A company should engage in value-creating activities to weigh costs, benefits, and risks and to fix any problems.


Satellogic, based in Buenos Aires, Argentina, combines proprietary satellite data and advanced analytics techniques to solve problems such as how to increase food production or generate energy efficiently. The company worked with a client, the Chilean holding company HoldCo, on an innovative way to predict crop locations from satellite imagery.

When HoldCo’s internal agricultural advisors doubted that the new approach was viable, Satellogic asked them for help with the model training process. The HoldCo specialists guided the Satellogic team in labeling satellite images, training the model, and validating model output.

Also key was a Satellogic domain expert who educated the client about the technical mechanics of the analytics and managed the “client last mile,” the gap between the AI’s outputs and how HoldCo applied them.

Manage the forces that shape your AI program

Achieving alignment in all three areas is difficult because the underlying factors keep changing, the researchers write. For example, changing a setting can change how an algorithm behaves, the COVID-19 pandemic or other disasters can change stakeholder needs, and a change in one area can affect the other two.

Fundamentally, achieving AI alignment requires that leaders manage the forces that shape, and are shaped by, a constantly evolving AI model core.

“Successful leaders will embrace the dynamism of and incorporate new activities that sustain AI solutions and create virtuous cycles of learning and adaptation,” the researchers write.