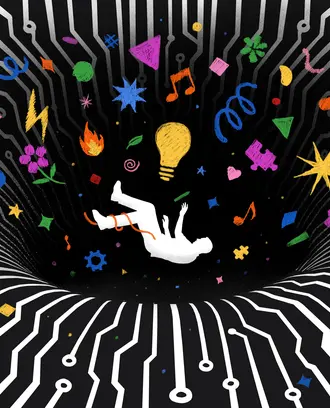

The AI road not taken

Advances in artificial intelligence are leading to job loss and increased surveillance. It’s not too late to change course.

Does this have to be the way? Artificial intelligence was supposed to boost productivity and create better futures in medicine, transportation, and workplaces. Instead, AI research and development has focused on only a few sectors, ones that are having a net negative impact on humanity, MIT economist Daron Acemoglu argues in “Redesigning AI,” a Boston Review book.

“Our current trajectory automates work to an excessive degree while refusing to invest in human productivity; further advances will displace workers and fail to create new opportunities,” Acemoglu writes. AI also threatens “democracy and individual freedoms,” he writes.

“Government involvement, norms shifting, and democratic oversight” can set us on a better path, he argues.

Acemoglu’s lead essay in “Redesigning AI” is followed by short responses from AI researchers, labor advocates, economists, philosophers, and ethicists. They discuss economic inequality, possible futures for workers, and what could happen next in AI. In the excerpt below, Acemoglu explains why the path we’re on is not set and why the reflexive refrain of “But the market …” doesn’t hold up in the face of basic economics.

+++

Many decry the disruption that automation has already produced and is likely to cause in the future. Many are also concerned about the deleterious effects new technologies might have on individual liberty and democratic procedure. But the majority of these commentators view such concerns with a sense of inevitability — they believe that it is in the very nature of AI to accelerate automation and to enable governments and companies to control individuals’ behavior.

Yet society’s march toward joblessness and surveillance is not inevitable. The future of AI is still open and can take us in many different directions. If we end up with powerful tools of surveillance and ubiquitous automation (with not enough tasks left for humans to perform), it will be because we chose that path.

Where else could we go? Even though the majority of AI research has been targeted toward automation in the production domain, there are plenty of new pastures where AI could complement humans. It can increase human productivity most powerfully by creating new tasks and activities for workers.

Let me give a few examples. The first is education, an area where AI has penetrated surprisingly little thus far. Current developments, such as they are, go in the direction of automating teachers — for example, by implementing automated grading or online resources to replace core teaching tasks. But AI could also revolutionize education by empowering teachers to adapt their material to the needs and attitudes of diverse students in real time. We already know that what works for one individual in the classroom may not work for another; different students find different elements of learning challenging. AI in the classroom can make teaching more adaptive and student-centered, generate distinct new teaching tasks, and, in the process, increase the productivity of — and the demand for — teachers.

The situation is very similar in health care, although this field has already witnessed significant AI investment. Up to this point, however, there have been few attempts to use AI to provide new, real-time, adaptive services to patients by nurses, technicians, and doctors.

Similarly, AI in the entertainment sector can go a long way toward creating new, productive tasks for workers. Intelligent systems can greatly facilitate human learning and training in most occupations and fields by making adaptive technical and contextual information available on demand.

Finally, AI can be combined with augmented and virtual reality to provide new productive opportunities to workers in blue-collar and technical occupations. For example, it can enable them to achieve a higher degree of precision so that they can collaborate with robotics technology and perform integrated design tasks.

In all of these areas, AI can be a powerful tool for deploying the creativity, judgment, and flexibility of humans rather than simply automating their jobs. It can help us protect their privacy and freedom, too. Plenty of academic research shows how emerging technologies — differential privacy, adversarial neural cryptography, secure multiparty computation, and homomorphic encryption, to name a few — can protect privacy and detect security threats and snooping, but this research is still marginal to commercial products and services.

There is also growing awareness among both the public and the AI community that new technologies can harm public discourse, freedom, and democracy. In this climate many are demanding a concerted effort to use AI for good. Nevertheless, it is remarkable how much of AI research still focuses on applications that automate jobs and increase the ability of governments and companies to monitor and manipulate individuals. This can and needs to change.

The market illusion

One objection to the argument I have developed is that it is unwise to mess with the market. Who are we to interfere with the innovations and technological breakthroughs the market is generating? Wouldn’t intervening sacrifice productivity growth and even risk our technological vibrancy? Aren’t we better off just letting the market mechanism deliver the best technologies and then use other tools, such as tax-based redistribution or universal basic income, to make sure that everybody benefits?

The answer is no, for several reasons. First, it isn’t clear that the market is doing a great job of selecting the right technologies. It is true that we are in the midst of a period of prodigious technological creativity, with new breakthroughs and applications invented every day.

Yet Robert Solow’s thirty-year-old quip about computers — that they are “everywhere but in the productivity statistics” — is even more true today. Despite these mind-boggling inventions, current productivity growth is strikingly slow compared to the decades that followed World War II. This sluggishness is clear from the standard statistic that economists use for measuring how much the technological capability of the economy is expanding — the growth of total factor productivity.

TFP growth answers a simple question: If we kept the amount of labor and capital resources we are using constant from one year to the next, and only our technological capabilities changed, how much would aggregate income grow? TFP growth in much of the industrialized world was rapid during the decades that followed World War II and has fallen sharply since then. In the United States, for example, the average TFP growth was close to 2% a year between 1920 and 1970, and has averaged only a little above 0.5% a year over the last three decades. So the case that the market is doing a fantastic job of expanding our productive capacity isn’t ironclad.
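The thought experiment above can be made concrete with a small sketch. It assumes the standard growth-accounting decomposition, in which TFP growth is whatever output growth is left after subtracting share-weighted input growth; the excerpt does not specify a method, so the formula, the function names, and the one-third capital share are illustrative assumptions. Only the 2% and 0.5% rates come from the text.

```python
def tfp_growth(output_growth, capital_growth, labor_growth, capital_share=1 / 3):
    # Solow residual: the part of output growth not explained by input growth.
    # capital_share = 1/3 is an illustrative assumption, not a figure from the text.
    labor_share = 1.0 - capital_share
    return output_growth - capital_share * capital_growth - labor_share * labor_growth


def cumulative_gain(annual_rate, years):
    # Compound an annual growth rate over a number of years.
    return (1.0 + annual_rate) ** years - 1.0


# Inputs held constant, as in the thought experiment: income growth equals TFP growth.
print(tfp_growth(output_growth=0.02, capital_growth=0.0, labor_growth=0.0))

# Thirty years of compounding at the two rates quoted in the text.
print(round(cumulative_gain(0.02, 30), 3))   # 0.811
print(round(cumulative_gain(0.005, 30), 3))  # 0.161
```

Under these assumptions, three decades at 2% a year would raise income by roughly 81% with no additional labor or capital, while three decades at 0.5% would raise it by roughly 16%.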

The argument that we should rely on the market for setting the direction of technological change is weak as well. In the terminology of economics, innovation creates significant positive “externalities”: when a company or a researcher innovates, many of the benefits accrue to others. This is doubly so for technologies that create new tasks. The beneficiaries are often workers whose wages increase (and new firms that later find the right organizational structures and come up with creative products to make use of these new tasks). But these benefits are not part of the calculus of innovating firms and researchers. Ordinary market forces, which fail to take account of externalities, may therefore deter the types of technologies that have the greatest social value.

This same reasoning is even more compelling when new products produce noneconomic costs and benefits. Consider surveillance technologies. The demand for surveillance from repressive (and even some democratic-looking) governments may be great, generating plenty of financial incentives for firms and researchers to invest in facial recognition and snooping technologies. But the erosion of liberties is a notable noneconomic cost that is often not taken into account. A similar point holds for automation technologies: it is easy to ignore the vital role that good, secure, and high-paying jobs play in making people feel fulfilled. With all of these externalities, how can we assume that the market will get things right?

Market troubles multiply further still when there are competing technological paradigms, as in the field of AI. When one paradigm is ahead of the others, both researchers and companies are tempted to herd on that leading paradigm, even if another one is more productive. Consequently, when the wrong paradigm surges ahead, it becomes very difficult to switch to more promising alternatives.

Last but certainly not least, innovation responds not just to economic incentives but also to norms. What researchers find acceptable, exciting, and promising is not purely a function of economic reward. Social norms play a key role by shaping researchers’ aims as well as their moral compasses. And if the norms within the research area do not reflect our social objectives, the resulting technological change will not serve society’s best interests.

All of these reasons cast doubt on the wisdom of leaving the market to itself. What’s more, the measures that might be thought to compensate for a market left to itself — redistribution via taxes and the social safety net — are both insufficient and unlikely to work. We certainly need a better safety net. (The COVID-19 pandemic has made that even clearer.) But if we do not generate meaningful employment opportunities — and thus a viable social purpose — for most people in society, how can democracy work? And if democracy doesn’t work, how can we enact such redistributive measures — and how can we be sure that they will remain in place in the future?

Even worse, building shared prosperity based predominantly on redistribution is a fantasy. There is no doubt that redistribution — via a progressive tax system and a robust social safety net — has been an important pillar of shared prosperity in much of the twentieth century (and high-quality public education has been critical). But it has been a supporting pillar, not the main engine of shared prosperity. Jobs, and especially good jobs, have been much more central, bolstered by productivity growth and labor market institutions supporting high wages.

We can see this most clearly from the experiences of Nordic countries, where productivity growth, job creation, and shared gains in the labor market have been the bulwark of their social democratic compact. Industry-level wage agreements between trade unions and business associations set an essentially fixed wage for the same job throughout an industry. These collective agreements produced high and broadly equal wages for workers in similar roles.

More importantly, the system encouraged productivity growth and the creation of a plentiful supply of good jobs because, with wages largely fixed at the industry level, firms got to keep higher productivity as profits and had strong incentives to innovate and invest.

--

The direction of future AI and the future health of our economy and democracy are in our hands. We can and must act. But it would be naïve to underestimate the enormous challenges we face.

Excerpted from Redesigning AI edited by Daron Acemoglu. Copyright © 2021 by Daron Acemoglu. Published by Boston Review. All Rights Reserved.